This is the third and final post of an article discussing the amount of luck in determining outcomes in the NFL. In the first post, I compared the actual distribution of team win-loss records over the past five seasons with an idealized pure luck distribution. I found that only 78 out of 160 actual season records (48%) differed from what we’d expect if the NFL were determined completely by luck.

In the second post, I compared the actual distribution with an idealized distribution of records in a theoretical league governed by “pure skill.”

In this post, I will unify the three distributions--actual, luck, and skill--into one algorithm. The resulting equation reveals the proportion of NFL games in which the deciding factor is luck and not the comparative strength of each opponent.

LUCK, SKILL, AND OBSERVED

The chart below is a histogram of the pure binomial distribution, the simulated pure-skill (zero luck) distribution, and the actual distribution of NFL records since the '02 expansion. (Pure luck is blue, actual is yellow, and pure skill is red.)

When I first examined the three distributions together, I was struck by how the actual distribution appeared to split the difference between the luck and skill distributions. The actual records appear to be some sort of combination of the two. To me, it looked as if the pure-luck binomial distribution was pressed into a flatter and wider distribution by skill.

LUCK/SKILL SYNTHESIS

It dawned on me to create another simulation, one that synthesized the pure-luck and pure-skill distributions together in varying degrees. (10% luck/90% skill, 20% luck/80% skill, etc.) Basically, the luck% variable determined a percentage of games (chosen at random) to be decided by pure luck, essentially a coin flip. The remainder of the games were credited to the superior team. The simulation algorithm looked like this:

If rand() < %luck, then game outcome = pure luck, else game outcome = win by the better team
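A minimal Python sketch of that rule follows. It is an illustration under stated assumptions, not the code actually used for the charts: team strength is taken to be a strict ranking, opponents are paired at random each week instead of following a real NFL schedule, and the names `simulate_season` and `win_distribution` are hypothetical.

```python
import random
from collections import Counter

def simulate_season(luck, n_teams=32, n_games=16, rng=random):
    """Return a list of win totals, one per team, for one simulated season."""
    # Assumption: teams are strictly ranked; a lower index means a better team.
    wins = [0] * n_teams
    for _ in range(n_games):
        # Assumption: opponents are drawn at random each week (no real schedule).
        teams = list(range(n_teams))
        rng.shuffle(teams)
        for a, b in zip(teams[::2], teams[1::2]):
            if rng.random() < luck:
                winner = rng.choice((a, b))  # pure luck: a coin flip
            else:
                winner = min(a, b)           # pure skill: the better team wins
            wins[winner] += 1
    return wins

def win_distribution(luck, n_seasons=2000, n_games=16, seed=0):
    """Share of team-seasons ending with 0, 1, ..., n_games wins."""
    rng = random.Random(seed)
    counts = Counter()
    for _ in range(n_seasons):
        counts.update(simulate_season(luck, n_games=n_games, rng=rng))
    total = sum(counts.values())
    return [counts[w] / total for w in range(n_games + 1)]

if __name__ == "__main__":
    for luck in (0.0, 0.5, 1.0):
        dist = win_distribution(luck, n_seasons=200)
        print(luck, [round(p, 3) for p in dist])
```

Setting `luck` to 1.0 should give roughly the binomial shape, and lower values should flatten and widen it.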

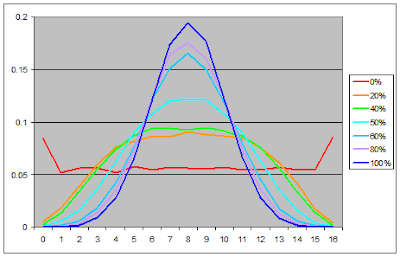

I varied the %luck value between 0 and 1, re-running the simulation. Here are some representative win distributions (legend is in %luck):

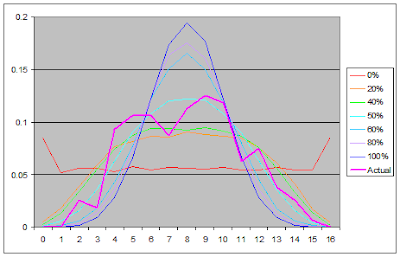

Next I overlaid the actual distribution.

PROPORTION OF LUCK IN NFL OUTCOMES

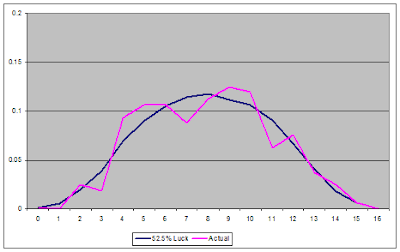

Then I varied the %luck value until it maximized the goodness of fit between the actual distribution and the synthesized distribution. At 52.5% luck, the theoretical distribution is statistically indistinguishable from the actual distribution (chi-square goodness of fit p=0.94). In other words, if the synthesized distribution were the true one, discrepancies at least as large as those observed would arise from sampling error alone about 94% of the time.
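The fitting step can be sketched roughly as below. This is an assumption-laden illustration, not the original analysis: the "observed" counts are a stand-in generated by the simulator itself (the real 160 team-season records are not reproduced here), the schedule is random pairings, the grid is coarse, and the helper names are hypothetical. Only the chi-square statistic is computed. A p-value would come from the chi-square distribution, for example via `scipy.stats.chisquare`, which is left out to keep the sketch dependency-free.

```python
import random
from collections import Counter

N_TEAMS, N_GAMES = 32, 16

def season_wins(luck, rng):
    """One simulated season; lower team index means a better team (assumption)."""
    wins = [0] * N_TEAMS
    for _ in range(N_GAMES):
        teams = list(range(N_TEAMS))
        rng.shuffle(teams)  # assumption: random weekly pairings
        for a, b in zip(teams[::2], teams[1::2]):
            winner = rng.choice((a, b)) if rng.random() < luck else min(a, b)
            wins[winner] += 1
    return wins

def win_counts(luck, n_seasons, rng):
    """Number of team-seasons at each win total, 0 through N_GAMES."""
    counts = Counter()
    for _ in range(n_seasons):
        counts.update(season_wins(luck, rng))
    return [counts[w] for w in range(N_GAMES + 1)]

def chi_square(observed, expected):
    """Pearson chi-square statistic; infinite if an impossible bin is observed."""
    stat = 0.0
    for o, e in zip(observed, expected):
        if e > 0:
            stat += (o - e) ** 2 / e
        elif o > 0:
            return float("inf")
    return stat

def best_luck(observed, grid, n_seasons=300, seed=0):
    """Return the grid value of luck with the smallest chi-square statistic."""
    rng = random.Random(seed)
    n_obs = sum(observed)
    scores = {}
    for luck in grid:
        sim = win_counts(luck, n_seasons, rng)
        scale = n_obs / sum(sim)  # rescale simulated counts to the observed total
        scores[luck] = chi_square(observed, [c * scale for c in sim])
    return min(scores, key=scores.get), scores

# Stand-in for the actual records: 5 simulated seasons (160 team-seasons).
observed = win_counts(0.5, 5, random.Random(42))
grid = [i / 10 for i in range(11)]
luck_hat, scores = best_luck(observed, grid)
print("best-fitting luck on this grid:", luck_hat)
```

With only 160 team-seasons the statistic is noisy, so a finer grid and more simulated seasons per grid point would be needed before reading much into the third digit.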

THEORETICAL MAXIMUM PREDICTION RATE

I will be very careful in stating what conclusion I draw from this exercise. The actual observed distribution of win-loss records in the NFL is indistinguishable from a league in which 52.5% of the games are decided at random and not by the comparative strength of each opponent.

I admit 52.5% seems very high. But keep in mind that in the games decided by luck, the better team still wins half of the time. In other words, in those games our prediction models are correct by chance half the time, just as a monkey picking winners would be. If the 52.5% figure is correct, the best any prediction model could do is:

0.50 + 0.525/2 = 0.76

So 76% correct would be the theoretical ceiling for NFL game prediction models. This is consistent with the various computer models as well as oddsmakers. It is also consistent with our intuitive experience--upsets seem to happen about a quarter of the time. Sometimes a model (or a person) can predict at better than a 76% correct rate, but anything above that would be...by luck.

I also posted a follow up to this series of articles here.

I was wondering about the following: With your logistic regression models, you get probabilities that Team X will win. Even if Team X has 90% probability, Team Y will win 1 out of 10 games. And in one article, you showed that a lot of the games have somewhere between a 40/60 and 60/40 P(win) split. So they're pretty close to random. Very few games get classified as 20/80 or 80/20. If you ran a simulation based on that distribution of predicted probabilities of winning, where each outcome was random but biased towards the better team, would you get a similar distribution?

If 76% of games are won by the better team, shouldn't our accuracies be better? My feeling is that team quality isn't transitive in the sense that "P(Team A beats Team B) > 50% and P(Team B beats Team C) > 50% imply P(Team A beats Team C) > 50%." It's like Rock-Paper-Scissors. Team A might have the strengths to exploit some of Team B's weaknesses, but in a 32-team league, Team B might be better against the other 30 teams on average. I have no idea how you would actually model that in a simulation, though. It might be a question of how many games get predicted as being somewhere between 40/60 and 60/40, where the skill levels are very close.

On your first suggestion, I just ran the 2007 season through the logit model (with 2006 team stats) to generate win probs for each game. Then, as you suggested, I ran a simulation of the season with random outcomes biased in proportion to the logit win probabilities. The resulting simulated distribution is very, very close to the actual distribution from the last 5 seasons. If anything, it looks a little taller, indicating slightly more luck is in the prediction model than reality. But that would be expected since the logit model does not consider all factors, nor claims to. Great suggestion.

(One very interesting thing I noticed was how dramatically some teams' records varied from simulated year to simulated year. Given the same team strength and the same schedule, team records would swing up to 5 or 6 wins apart.)
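For anyone who wants to try this kind of biased-coin season simulation, here is a rough Python sketch. Everything specific in it is a placeholder: the five teams, their ratings, the double round-robin schedule, and the simple logistic `win_prob` function stand in for the real 2007 schedule and the fitted logit model, neither of which is reproduced here.

```python
import math
import random

def win_prob(rating_a, rating_b):
    """Logistic win probability for team A (a stand-in for a fitted logit model)."""
    return 1.0 / (1.0 + math.exp(-(rating_a - rating_b)))

def simulate_schedule(schedule, rng):
    """schedule: list of (team_a, team_b, p_a_wins). Returns {team: wins}."""
    wins = {}
    for a, b, p in schedule:
        wins.setdefault(a, 0)
        wins.setdefault(b, 0)
        # Random outcome, biased toward the team the model favors.
        winner = a if rng.random() < p else b
        wins[winner] += 1
    return wins

# Hypothetical ratings and schedule (placeholders, not real NFL data).
ratings = {"A": 1.0, "B": 0.5, "C": 0.0, "D": -0.5, "E": -1.0}
teams = list(ratings)
schedule = [(a, b, win_prob(ratings[a], ratings[b]))
            for a in teams for b in teams if a != b]

rng = random.Random(0)
seasons = [simulate_schedule(schedule, rng) for _ in range(1000)]

# Same strengths, same schedule: how far apart do a team's records land?
for t in teams:
    totals = [s[t] for s in seasons]
    print(t, "fewest wins:", min(totals), "most wins:", max(totals))
```

The gap between the fewest and most wins for each team gives a feel for how much a record can move from one simulated season to the next with nothing else changing.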

I agree with you on the transitiveness of football. To some degree, there is a unique interaction between opponents. I think if I pull the string on that, it could be a flaw in my concept of a pure-skill league. You are suggesting it isn't quite that linear.

I do have an interaction model for game prediction that might capture some of the effect you mentioned. I have a post back in Jan or Feb about it. It's about as accurate as the logit model I've primarily used. They both produce very similar probabilities.

Brian, I appreciate your efforts, and your work is very unique.

Could you clear up a question regarding the % of luck in NFL games?

If the NFL appears to be a 52.5% luck league, and the luck is spread equally between teams, doesn't it follow that the theoretical ceiling for any favorite in any one game would be

(52.5/2) + (48.5) approx. 74.25%

(And this would be reserved for the most lopsided matchup of the #1 team vs. the #32 team.) If this is true, am I missing something?

How is it possible that a fav. team in your prediction

Brian, I appreciate your work. Great site! Very informative, with unique perspectives.

A question about the role of luck?

Doesn't it follow from your conclusion that there is a theoretical ceiling on the % any favorite may have in a game, and that the ceiling is (52.5%/2) + 48.5%, approx. 74.25%? Also, that this would be reserved for the most lopsided matchup of team #1 vs. team #32? If this is true, how did NE come out as a 76% fav. for the SB?

Just wondering if I'm missing something?

Dan-

Good question. What the results suggest is that about 25% of all NFL games are won by teams that are not "the better team." It suggests a theoretical maximum to how well any prediction model can do, i.e. identifying which team truly is the "better team."

I'm not saying that the underdog has at least a 25% chance of winning any game, no matter how big the mismatch is. I'm just saying that on average the better team wins 75% of all games.

So a game forecast at 85/15 in favor of the stronger team is still very possible. But there are many 55/45 type games too, so on average the true favorite wins about 75% of the time.

Brian;

Have you quantified the maximum benefit (in terms of points) that a team can receive from this randomness/luck in a particular game?

Is it possible in your prediction modeling to have a 'sure thing' - a game that is projected (100%-0%) where the skill differential between the two teams is so great that even if the less skilled team received the maximum benefit from this randomness it would not determine the outcome?

(By the way, what was your most lopsided matchup of the year - Miami vs. NE?)

With random

Does the distribution of randomness or luck that a team receives over a course of time even out?

In other words, is it possible for one team to continue

to receive 'more' luck than others?

Or is the luck a team receives independent of what happened the game(s) before?

thx

Dan

Dan-No, I haven't quantified the points a team could receive in any single game.

It can never get completely to 100% certainty, simply based on the math involved. In theory it could be 99.9 or something, and round to 100 though.

The biggest split in '07 was OAK at JAX (.03 vs. .97). There were two games at .05 to .95--OAK at SD and KC at IND.

Brian,

thanks.

One further question.

Most prediction models

account for home field advantage.

However, this advantage is not part of skill, nor part of luck.

Therefore, doesn't this open the door for another possible category beyond skill and luck (i.e. advantages)

that accounts for a team's victory?

How do you account for home field advantage?

To summarize: if it is generally accepted that we allow for one type of advantage (namely home field)

that can work its influence on skill and luck in the outcome of a game,

why not other non-skill, non-luck advantages (motivation, for instance)?

dan

Dan-That's a good point about home field. My logit model does include HFA, but I wasn't sure how to account for it in the type of analysis I did in my post. I suppose in theory it would reduce the amount of apparent randomness slightly, especially when opponents are closely matched.

Motivation level is another good question. My gut feeling is that a 16-game season, with 6 days between games, leaves little possibility for unmotivated teams. Almost every play is meaningful, even to losing teams, when million-dollar contracts are on the line. On the other hand, there are a handful of games in the final week or two of each season in which playoff teams rest their starters. That might also add a game or two of 'apparent' randomness.

This is one of my favorite articles in the history of this site. Breaking down the true percentage of outcomes based upon skill compared to those based on a randomly assigned luck factor for each opponent is genius. Incredibly helpful as I am now considering the same for mixed martial arts.